The Stars Our Destination: SpaceX Files for IPO

SpaceX’s S-1 hit the SEC on 20 May 2026. A $20 billion bridge loan forces the IPO, xAI and X have been folded in, and Starlink is paying for everything. The deal is a referendum on whether the equity market can still finance voyages into the unknown.

A Chronicle Tale of Nvidia Investors

Six investors embark on a journey as a once-in-a-generation opportunity unfolds. A tale of vision, patience, discipline, and learning the hardest part of investing.

Berkshire’s Cash Pile Tells Nothing about the Market

$397 billion in cash is not a market signal. It is the receipt for the largest missed opportunity in modern investing history.

Australia RBA Cash Rate: Air Conditioning with the Window Open

The RBA has hiked three times in 2026 while most central banks cut or hold. Australia’s inflation is supply-driven — and rate hikes do not fix supply shocks.

The AI Capex Machine: When Does It Stop?

The four largest hyperscalers have committed $700 billion in AI capex for 2026. We model when the cash-flow constraint forces the first guidance cut, and what reprices when it does.

Reading the Macro Page — A Primer

A guide to the neucore.ai macro page — how the macro score, market regime, sentiment index, and backtest chart work together to describe the U.S. equity backdrop.

A Negative-Sum Game, Being Reshaped by AI

Sharpe's arithmetic makes short-term trading negative-sum after costs — and AI does not break the math. It concentrates the winners, homogenises the losers, and grows the rake.

The Next Crowded Trade Will Be Built by Machines

AI agents are democratising quantitative trading — but if everyone's agent finds the same alpha, the oldest systemic risk in markets arrives through a faster channel.

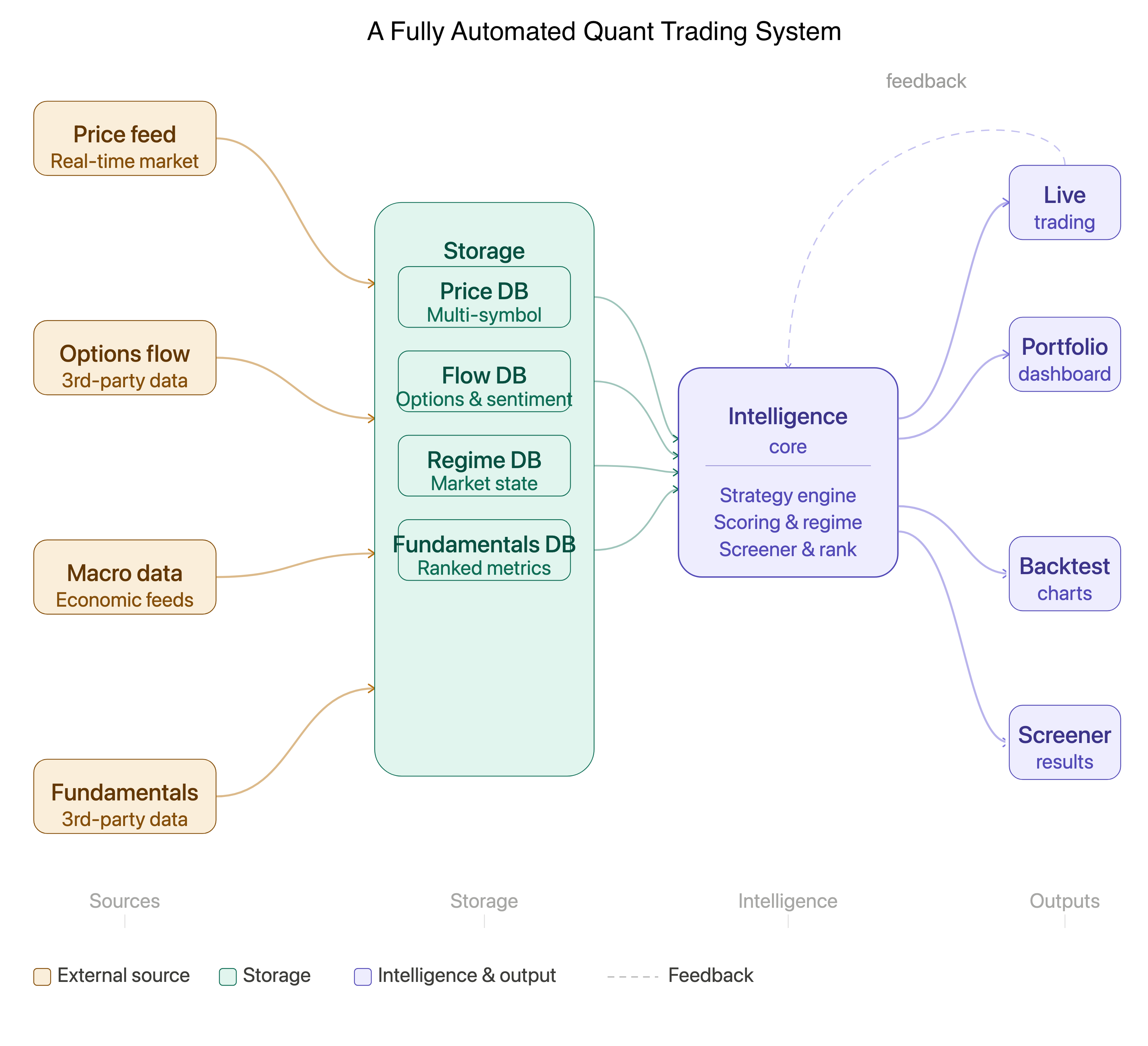

Lessons Learnt by Working with Anthropic's Claude Code in Software Development

Five weeks, one developer, an end-to-end quantitative trading system. Reflections on what AI coding agents do brilliantly, where they fall short, and why human judgment still matters.